Feature Toggles

Feature toggles are real assets to your software practice, right?

Absolutely, but without discipline, the aggregate complexity undermines system reliability.

Background

Feature toggles are dynamic behavioral control switches, typically stored as name-value pairs in a database table or in environment variables. A value can be changed during a release or even outside of a deploy window. The change may take effect immediately, or it may require an app restart. A new variable, of course, requires a software release containing the code that reads it.
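
As a minimal sketch of the environment-variable case, a boolean toggle can be read with a small helper. The helper `is_enabled`, the toggle name `CHECKOUT_NEW_FLOW_ENABLED` and the set of truthy strings are assumptions made for illustration, not part of any particular framework.

```python
import os

# Strings treated as "on"; this set is an assumption, pick your own convention.
TRUTHY = {"1", "true", "yes", "on"}


def is_enabled(name, default=False, environ=None):
    """Return True when the named toggle holds a truthy string."""
    env = os.environ if environ is None else environ
    raw = env.get(name)
    if raw is None:
        return default  # toggle not configured at all
    return raw.strip().lower() in TRUTHY


def checkout_flow(environ=None):
    # The toggle is looked up on every call. With a database-backed mapping a
    # change could apply immediately; a process normally only sees the
    # environment variables it was started with, hence the restart.
    if is_enabled("CHECKOUT_NEW_FLOW_ENABLED", environ=environ):
        return "new"
    return "old"
```

Passing the mapping in as a parameter keeps the check easy to test with the toggle both on and off.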

These are absolutely essential for Continuous Delivery (CD), but also very useful for:

- Staggered application releases for a major system-wide feature

- Experimental code and A/B variant testing

- Operational kill switches, kind of like manual circuit breakers, to degrade more gracefully when a functional or load issue arises.

Martin Fowler's FeatureToggle article is a good intro. Pete Hodgson's article Feature Toggles dives deeper. It calls out four types of toggles (release, experimental, ops and permission), maps them along the dimensions of longevity and dynamism, and digs into the management questions with specific recommendations.

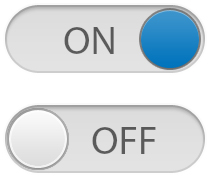

Configuration parameters can also be more than just boolean (true or false). They can hold integers or strings, or more complex structures such as lists and maps. In my experience, feature toggles are generally boolean for simple release flagging, but one could be, say, an integer denoting a user class for a limited rollout or canary test.
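
One way to read the integer case, sketched under assumptions: the toggle holds a rollout level, and a user sees the feature when their class is at or below that level. The three user classes and the function names here are hypothetical.

```python
# Hypothetical user classes, ordered from narrowest to widest audience.
INTERNAL, BETA, GENERAL = 1, 2, 3


def parse_rollout_level(raw):
    """Convert the stored string to a level, rejecting out-of-range values."""
    level = int(raw)
    if not 0 <= level <= GENERAL:
        raise ValueError(f"rollout level out of range: {level}")
    return level


def feature_visible(user_class, rollout_level):
    # Level 0 means off for everyone, since user classes start at 1.
    return user_class <= rollout_level
```

Setting the level to 1 would expose the feature to internal users only, and raising it to 3 would open it to everyone.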

Feature toggles are members of a broader class of configuration parameters (a.k.a. properties and variables). It's useful to store the configuration externally from the software (see principle #3, Config, in the 12 Factor App). Your app may use the same mechanisms to inject configuration, but we need to draw a line between feature toggles and environmental configuration, e.g. dependencies like URLs, credentials and the like. Externalization is absolutely necessary for portable images that can be promoted without change: build once, promote often.

Business rules can end up in configuration parameters. Perhaps you want to experiment before locking the rules into code, for example the minimum threshold for rockstar status in some social network. Formalizing such a rule in a data store is probably better than code, but it takes more effort: extending the schema, writing admin screens and so on. Key-value pairs are a quick way of releasing with dynamic behavior.

Disaster

I was perusing Google's Site Reliability Engineering online book today and came across a great story on the danger of feature toggles. There is a footnote in the book to the US Securities and Exchange Commission's Cease & Desist order for Knight Capital, a market maker that lost $460 million in 45 minutes due to an automated trading system that ran off the rails. The SEC said Knight didn't have adequate protections in place to limit system errors.

It's a very readable post-mortem. A repurposed software toggle activated a ten-year-old unused feature, causing ruin. The developers of a new feature chose to reuse a previously used toggle. Turning the toggle on with a new release caused the old 'Power Peg' code block to start executing runaway trades. Presumably they tested in lower environments with the toggle on, but the test context (customers, bids and asks, orders, exchanges, etc.) must have been different, and so the powder keg was untouched.

According to the Knight Capital Group Wikipedia page, Knight stock lost 75% overnight and the company needed a capital injection to survive (Jefferies stepped in to invest). Knight merged with Getco a few months later, forming KCG Holdings. The Cease & Desist order dates from October 2013, and it seems it didn't close the business but imposed a US $12 million fine and a slew of corrective measures.

My Experience

I've worked with a system that has many tens of toggles, some dating back many years. These aren't documented for ops, much less product owners. They aren't addressed in feature or regression test strategies.

Test coverage is also an issue. I've never seen even a slice of the possible combinations tested.

Think about just two boolean toggles and the test variations.

There are four of them: on-on, on-off, off-on, off-off. With tens or hundreds, and some having multiple values, comprehensive testing is impractical. Yikes.
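
The arithmetic can be sketched in a few lines: the number of configurations is the product of the number of values each toggle can take. The function names and the example toggles are illustrative assumptions.

```python
from itertools import product
from math import prod


def configuration_count(toggle_values):
    """Multiply the number of allowed values of every toggle."""
    return prod(len(values) for values in toggle_values.values())


def all_configurations(toggle_values):
    """Yield every combination as a dict of toggle name to value."""
    names = list(toggle_values)
    for combo in product(*(toggle_values[name] for name in names)):
        yield dict(zip(names, combo))


two = {"a": [True, False], "b": [True, False]}
print(configuration_count(two))     # 4

thirty = {f"toggle_{i}": [True, False] for i in range(30)}
print(configuration_count(thirty))  # 1073741824
```

Thirty boolean toggles already give more than a billion combinations, before any multi-valued parameter is counted.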

Mitigation

Like many software engineering process challenges, we need communication and discipline to manage this complexity. My goals here are to:

- limit the active quantity

- communicate the currently active toggles to all stakeholders

- communicate the intent, usage and interactions with other toggles

- communicate test coverage and therefore risk

To limit quantity, I agree with Mr. Hodgson that we should put constraints on longevity. Plan a toggle's expiry at its introduction and give it a lifespan of one or two release cycles. If you release weekly, it could last for one or two weeks. A guideline is all that is needed, not a hard and fast rule.
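
A planned expiry is easy to check mechanically. The sketch below assumes you record a planned removal date per toggle somewhere; the toggle names, dates and the function `overdue_toggles` are made up for illustration. In keeping with a guideline rather than a rule, it only reports.

```python
from datetime import date


def overdue_toggles(planned_removal, today):
    """Return the names of toggles whose planned removal date has passed."""
    return sorted(
        name for name, expiry in planned_removal.items() if expiry < today
    )


# Hypothetical toggles with their planned removal dates.
planned_removal = {
    "shop.checkout.new_flow.enabled": date(2017, 11, 1),
    "shop.search.ranking_v2.enabled": date(2017, 12, 1),
}

for name in overdue_toggles(planned_removal, today=date(2017, 11, 9)):
    print(f"past its planned removal date: {name}")
```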

When a toggle is introduced into your dev cycle, tie a string around your finger to remove it. We can make the extra cost of the flag explicit to product owners: create another task/story/product backlog item/etc. in your backlog to remove the toggle, and assign it to the release that lines up with its expiry. Removing it means dropping it from the data store and refactoring the code to delete the toggle check, if/else block, etc. (please, no commenting out; history is in our version control system). Or, with some fancier toggling framework, maybe it's a config file change. (Some framework options).

Communication can be solved with a lightweight document: a spreadsheet that tracks the current inventory, with per-toggle lifecycle status and plans.

Here are some columns I would use in this inventory spreadsheet:

- Name - could use dotted notation to create a namespace, e.g. {system}.{module}.{feature}.enabled

- Value - intended value, e.g. true, 100, orange, etc.

- Value Constraints - boolean, or integer ranges, or string enumerations, etc.

- Tested Scenarios - optional, but useful to assess risk of changing a value at run-time

- Description - human readable purpose and usage

- Related To - interactions with other parameters

- Add {env} - date, sprint or release name for each of your environments

- Remove {env} - planned or actual date, sprint or release name for each of your environments
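
If the spreadsheet ever outgrows itself, the same columns map naturally onto a small record type. This is only a sketch: the class `ToggleRecord`, its field names and the `problems` check are assumptions, and value constraints are modelled simply as any container of allowed values.

```python
from dataclasses import dataclass, field
from typing import Any, Container


@dataclass
class ToggleRecord:
    name: str                    # e.g. "shop.checkout.new_flow.enabled"
    value: Any                   # intended value
    allowed: Container           # value constraints, e.g. (True, False) or range(0, 101)
    description: str = ""
    tested_scenarios: list = field(default_factory=list)
    related_to: list = field(default_factory=list)
    added: dict = field(default_factory=dict)    # env -> date, sprint or release
    removed: dict = field(default_factory=dict)  # env -> planned or actual removal

    def problems(self):
        """List the gaps a reviewer would want to know about."""
        issues = []
        if self.value not in self.allowed:
            issues.append(f"{self.name}: value {self.value!r} violates constraints")
        if not self.description:
            issues.append(f"{self.name}: no description")
        if not self.tested_scenarios:
            issues.append(f"{self.name}: no tested scenarios recorded")
        return issues
```

A record with no tested scenarios is not an error, but flagging it makes the risk of changing the value at run-time visible.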

Of course, you'll have a sync issue with your environments. Maybe you can do diffs before major milestones, like regression start or environment promotion, or, better, automate a transfer to your run-time data store.
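
Such a diff can be a few lines once both sides are available as name-value mappings. How you export the inventory and read the run-time store is up to you; the function `diff_toggles` and its result keys are assumptions for this sketch.

```python
def diff_toggles(inventory, runtime):
    """Compare intended values (inventory) with what an environment holds."""
    shared = inventory.keys() & runtime.keys()
    return {
        # Documented but not present in the environment.
        "missing_in_runtime": sorted(inventory.keys() - runtime.keys()),
        # Present in the environment but never documented.
        "undocumented": sorted(runtime.keys() - inventory.keys()),
        # Present on both sides with different values.
        "value_mismatch": sorted(n for n in shared if inventory[n] != runtime[n]),
    }


inventory = {"a.enabled": True, "b.enabled": False, "c.limit": 100}
runtime = {"a.enabled": True, "b.enabled": True, "d.legacy": True}
print(diff_toggles(inventory, runtime))
```

The "undocumented" bucket is the one to watch: it is where forgotten toggles like the one in the Knight story would show up.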

Now, what to do about test coverage? Since we don't know what will be configured in the future, we need to use our judgement to build the test strategy. The story acceptance tests can state our expected coverage, especially for a non-boolean toggle. More broadly, on feature interaction, Mr. Fowler suggests that we need to test:

- all the toggles on that are expected to be on in the next release

- all toggles on

The premise here is that feature toggles are isolated in behavior. That may or may not be the case in your system.
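
For boolean toggles, the two configurations in the list above can be generated from the toggle inventory. The function name and the sample toggles are hypothetical; non-boolean parameters would need their own chosen values.

```python
def recommended_test_configs(all_toggles, on_next_release):
    """Build the two configurations: next-release settings and everything on."""
    unknown = set(on_next_release) - set(all_toggles)
    if unknown:
        raise ValueError(f"unknown toggles: {sorted(unknown)}")
    return {
        "next_release": {name: name in on_next_release for name in all_toggles},
        "all_on": {name: True for name in all_toggles},
    }


configs = recommended_test_configs(
    all_toggles=["new_checkout", "ranking_v2", "dark_mode"],
    on_next_release={"new_checkout"},
)
for label, settings in configs.items():
    print(label, settings)
```

Two runs of the regression suite, one per configuration, is a far smaller commitment than the full set of combinations.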

In any case, I believe it should be raised as a concern in regression test planning. When you consider scope expansion (does it ever contract?) for a new release, make sure to consider the feature flag test coverage.

And on a related topic, the coverage of other configuration parameter variants, especially business logic, should be considered as well.

Conclusion

Feature toggles, and dynamic configuration in general, should be part of your design toolbox. But track them carefully.

Published Nov 9, 2017

Train switching levers image by Signalhead at the English language Wikipedia, CC BY-SA 3.0

Lever icon made by Lorc, Delapouite & contributors, CC 4.0 BY license

Toggle switches icon from Free Icon Library

Comments? email me - mark at sawers dot com